2024 (@2024@leonfurze.com)

More From the Fence: Articles For and Against AI in Education

A few weeks ago I wrote an article explaining why I'm still on the fence with AI, and refuse to tip entirely into either refusal or full adoption. In this post, I'm pulling together some of the most read articles on this site from both sides of the fence, to add a little weight to my quite possibly precarious position. I honestly think that balance is the key: yes, Generative AI technologies are an ethical minefield, and yes, resistance and refusal are both sound options. But on the other […]

https://leonfurze.com/2024/12/05/more-from-the-fence-articles-for-and-against-ai-in-education/


2024 (@2024@leonfurze.com)

OpenAI is Coming for Writers

There's something brewing at OpenAI, and it's not their often promised AGI. While Altman seduces investors with the speculative promises of Artificial General Intelligence and ever more powerful versions of GPT, a very real play is being made for much more concrete territory. Over the past few months, a number of product releases and updates all point in the same direction: OpenAI doesn't see a place in its new world order for writers and teachers of writing. ChatGPT and the End of […]

https://leonfurze.com/2024/11/21/openai-is-coming-for-writers/


2024 (@2024@leonfurze.com)

The Near Future of Generative Artificial Intelligence in Education: Part Two

This post continues the exploration of emerging technologies affecting education, particularly focusing on offline AI, wearable technologies, and AI agents. The author emphasizes the accessibility and privacy benefits of running AI locally while discussing innovative advancements such as augmented reality glasses and autonomous AI agents, urging educators to adapt to these changes proactively.


2024 (@2024@leonfurze.com)

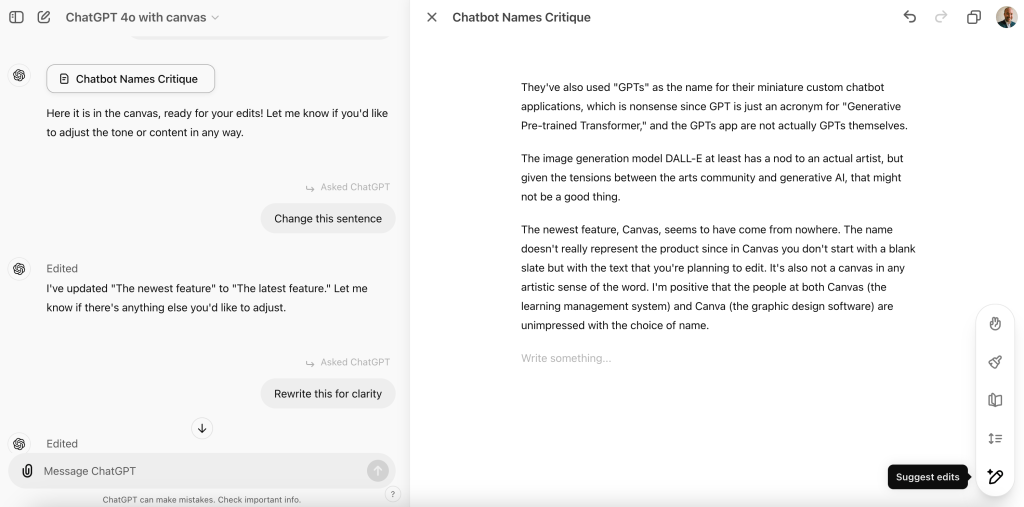

Hands on with ChatGPT Canvas

Canvas is OpenAI's latest addition to the ChatGPT interface, offering editing tools for text and code. It rolled out shortly after the release of the o1 model and Advanced Voice Mode for Plus subscribers, coinciding perfectly with OpenAI's latest fundraising round. Although it's far from perfect, the feature represents an important step for how AI developers are conceptualising the ways people use their tools. OpenAI's Naming Conventions Let's get this out of the way first: OpenAI is […]

https://leonfurze.com/2024/10/16/hands-on-with-chatgpt-canvas/


GuillaumeRossolini (@GuillaumeRossolini@infosec.exchange)

This weekend I finally caved and used #ChatGPT [1] to improve a program I’m working on [2]

The project

To recap the above thread this is a part of, I’m trying to build a mesh network of microcontrollers (ESP8266), each with an air quality sensor and they broadcast their readings so that a different microcontroller (ESP32) can forward them to a Raspberry Pi Zero for storage, statistics, visualization etc.

I have about a dozen nodes and the network has started to fall apart (it was fine when I had 6), so I’m trying to find workarounds, but I am unfamiliar with C++. This is where ChatGPT comes in.

The experience wasn’t smooth. It felt a little like chatting with a junior dev, fresh out of school and full of ideas about how a happy path algorithm should be written or how an ideal library should interface, but less concerned with the code in front of them.

How it started

I started by sharing the public URL for the .ino file (it’s an Arduino IDE project) on my GitHub. It looks like the bot used the URL as a search query rather than a link: it scanned the top results but didn’t find my code, and it told me so in so many words. I had to share the link to the raw code instead. No biggie, but a bit underwhelming.

(As an aside, I find it funny that the bot’s default search engine, Bing, was unable to locate a file from a public repository on GitHub based on its full URL. Both of these services are operated by Microsoft.)

Then, having just seen the code and after I expressed my desire for a code review, the bot proceeded to explain to me generalities of programming and best practice. Meh.

First code review

After a third prompt from me, it finally read my code but still provided several comments about things that aren’t in my program. I don’t use a display anywhere, so why the advice about OLED? (I know why, that was in one of the results in its first search, the one where it told me it hadn’t found my code). Of course I am indeed using the data with an output, mine being the mesh rather than a screen, but… Meh.

Out of the six initial suggestions, only the one about the macro was useful; although with the amount of memory at my disposal, that particular optimization wouldn’t matter.

Of the other recommendations, none applied to my program, and the bot kept suggesting their suboptimal variants as fixes later on, contradicting itself numerous times. Every time I corrected its mistake, it praised me for my insight and gave me a bullet list of pros and cons, which felt a little patronizing, and then it proceeded to make the same mistake a few prompts later. And again later on.

General impressions

The bot failed to refactor my code at any point, instead giving me basic unfinished examples modified to fit the current recommendation. One of the things I was worried about was my use of the JSON library and how strings are manipulated. After all, this is C++ and with a headless device that won’t tell me anything when it crashes. I tried a bunch of times to get help from the bot, failed and moved on.

Every time I challenged one of the bot’s suggestions, it came back either with an apology, saying that after further review my code didn’t need that particular change, or with a generic phrase that avoided giving me a firm answer at all.

The one suggestion that looked like it could improve things, about deep sleep, ended up being a non-starter because that would power down the sensor and I would have to restart the calibration phase on wake-up (that’s about 5 minutes for this sensor). The bot didn’t know to check what consequences the change would have.

Mixing software and hardware

This part was interesting. I was challenging the bot’s assumptions about deep sleep and how this specific sensor would behave in that case, and I told the bot to go read the code of the library for the sensor. I had to prompt this twice because the first time it came back with guesses. It looks like it did actually search for it, scanned the top results and came back with apparently more insight into the inner workings of the library. It didn’t find the authoritative source that I was using, but that’s a tough ask even for a human so I won’t hold this against it (it did manage to find two reasonable sources). Not meh and actually a decent attempt.

What the bot told me it found validated my existing bias, so I didn’t keep pushing. I also didn’t go and verify it myself.

Trying variations on earlier prompts

I tried again to get the bot to review my code for errors. As was becoming the norm, at my first prompt I got only generic answers; when prompted again the bot had apparently forgotten all about my code; and when I reminded it that it did have access to my code, then it finally obliged. Its answer was predictably full of extensive praise about each thing that I did correctly (in fact I was surprised that there were no errors, but given that, the bot’s response was predictable). I’d have been fine with a short list of common errors it checked for and didn’t find, but ok.

Then I tried to have the bot refactor my code in a few ways. I wanted to see if it could suggest code to send the data over the mesh in one format while writing on Serial in another format. I already knew it was bad at rewriting existing code.

That was a fiesta of all the advice it had given me thus far, and it applied the bad variant every time. It knew by then of my preference for the “guard” style but ignored it; it made several copies of each scalar; it didn’t rewrite my code at all, instead using some made-up example; it kept using functions that it had warned me against.

Spite

I tried trolling the bot by suggesting deep sleep again, but it can’t do sarcasm and it tried its best to give me points any time it could, so it didn’t contradict me and praised me again for my insight.

I also tried to see if a pointed question with no actual consequence would get flagged, but alas, the bot helpfully (not!) provided a pro/con list to help me decide for myself, not realizing how futile the decision was. This one wasn’t about sarcasm; I wanted the bot to notice that it was comparing two identical options and to tell me so in no uncertain terms.

Brainstorming

This is how I generally find this kind of bot useful: tossing around ideas, having it formulate them in several ways to help me solidify my own understanding of them.

I still tried to get it to suggest code with the libraries I am using, but I stopped insisting on refactoring my actual code. I also gave up on fine tuning the code according to the code standards established earlier in the conversation. At this point I have zero faith in their Memories product before even trying it 😂

(Perhaps an error I made here was in not telling the bot to forget the first search results)

The bot did make some assertions that look like they can hold water and I’ll have to verify them.

At one point I failed to see a correct expression in the suggested code, and when I told the bot that it was missing that expression, it didn’t call me out on it. I had to read the new answer and figure out that it was the exact same suggestion a second time. That’s annoying.

Getting an idea that might be good

I eventually saw that when trying to reconnect to the WiFi with gradually increasing delays between attempts, we end up at a delay so long that keeping the microcontroller awake perhaps doesn’t make sense.

For example, when I’m doing maintenance on the Raspberry Pi or on the root node, none of the sensor readings matter for a while. The nodes can try reconnecting a few times on signal failure in case it’s just a blip or a reboot, but if they fail for too long, they should feel free to go into power-saving mode and try again much later.

So I gave the bot a basic description of this algorithm and it provided code that we iterated on for a bit. At one point I gave up trying with words and I pasted code myself, which earned me deep praise again and a line by line explanation of how great my code was. Of course the bot was eager to improve on this anyway, but logic isn’t its forte…
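A minimal sketch of that idea, for illustration only: it is not the code from the conversation or from the repository. It assumes the delay doubles after every failed attempt, and that once the next delay would exceed a threshold the node sleeps instead of waiting. The names `ReconnectPolicy`, `nextStep` and `kExamplePolicy`, and all the numbers, are hypothetical. The logic is kept free of Arduino calls so it can be compiled and checked on a desktop.

```cpp
#include <cstdint>

// Hypothetical tuning values; nothing here comes from the original project.
struct ReconnectPolicy {
    std::uint32_t baseDelayMs;  // wait after the first failed attempt
    std::uint32_t maxDelayMs;   // past this, staying awake is not worth it
    std::uint32_t sleepMs;      // how long to power down before starting over
};

enum class Action { RetryAfterDelay, DeepSleep };

struct Decision {
    Action action;
    std::uint32_t waitMs;  // delay before retrying, or sleep duration
};

// Delay doubles with each failure: base, 2*base, 4*base, ...
// Computed in 64 bits with a capped shift so it cannot overflow.
constexpr std::uint64_t backoffDelayMs(const ReconnectPolicy& p,
                                       std::uint32_t failedAttempts) {
    return static_cast<std::uint64_t>(p.baseDelayMs)
           << (failedAttempts < 31 ? failedAttempts : 31);
}

// Retry while the backoff delay is still reasonable, otherwise give up
// for now and sleep for the (much longer) policy interval.
constexpr Decision nextStep(const ReconnectPolicy& p,
                            std::uint32_t failedAttempts) {
    return backoffDelayMs(p, failedAttempts) > p.maxDelayMs
               ? Decision{Action::DeepSleep, p.sleepMs}
               : Decision{Action::RetryAfterDelay,
                          static_cast<std::uint32_t>(
                              backoffDelayMs(p, failedAttempts))};
}

// Example: start at 1 s, stop retrying past 60 s, then sleep 10 minutes.
constexpr ReconnectPolicy kExamplePolicy{1000, 60000, 600000};
```

On the device, a reconnect loop would call `nextStep(kExamplePolicy, failures)` after each failure and either `delay()` for `waitMs` or hand `waitMs` to the platform's sleep call (on the ESP8266 Arduino core that would be `ESP.deepSleep()`, which takes microseconds, so the value needs converting). Since deep sleep powers down the sensor and forces the roughly 5-minute recalibration mentioned earlier, the sleep interval only pays off if it is long compared to that.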

Parting thoughts

Now I need to iterate on this on my own, since in my case the nodes with a sensor have no concept of my home WiFi and they can’t reach the rpi0, only the root node can do that. So that’s the only microcontroller that would save power in the event that my home WiFi went down. Big improvement! /s

As for the bot itself, while it has gotten better at conversing since earlier versions, has learned how to search the web, fetch content and parse it really fast, and can produce code that sometimes works, it’s still much less useful than a coding partner of any amount of experience.

I can’t gauge its trustworthiness or reliability, even when it’s sharing the links that it scanned, without reading through the materials myself.

It still can’t keep its facts straight, although it has access to API documentation and/or actual source code, and it rarely knows when it doesn’t have enough information.

[1] https://chatgpt.com/share/670d8875-dc8c-8007-97b1-9938b82ed838

[2] https://github.com/GuillaumeRossolini/griotte


2024 (@2024@leonfurze.com)

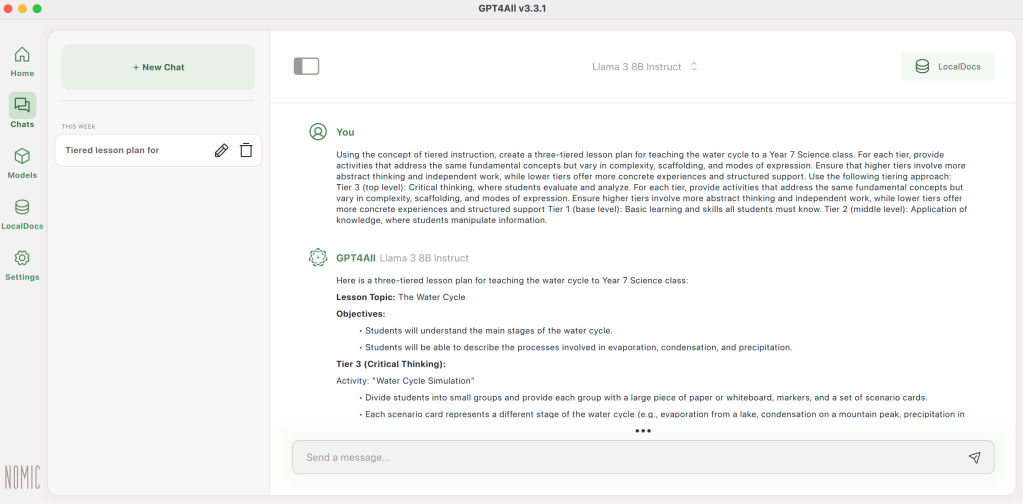

3 Ways for Educators to Run Local AI and Why You Should Bother

The article advocates for experimenting with local AI models, emphasising their accessibility and privacy benefits. It details three methods for setting up local AI: GPT4All for PCs, PocketPal for smartphones, and Ollama for command-line users. The author stresses the potential impact of local AI on education, questioning its implications for equity and academic integrity.

https://leonfurze.com/2024/10/14/3-ways-for-educators-to-run-local-ai-and-why-you-should-bother/


2024 (@2024@leonfurze.com)

More Practical AI Strategies: Differentiating

Educators continue to grapple with the ethical and practical implications of Generative AI, but it has proven valuable in enhancing teaching methods and student engagement. LLMs like ChatGPT can aid in visualising abstract concepts, refining editing skills, and providing constructive feedback, presenting numerous opportunities for their integration in education.

https://leonfurze.com/2024/10/09/more-practical-ai-strategies-differentiating/


2024 (@2024@leonfurze.com)

Which Tools to Use? A Resource List of GenAI for Educators

I get a lot of people asking me which GenAI apps to try out in workshops and professional learning sessions. Since the release of ChatGPT in November 2022, we've been flooded with different Generative AI applications. By now, you've probably seen those images of hundreds of Generative AI apps - intimidating looking lists of every app imaginable, including hundreds you'll never actually use. I prefer a more streamlined list, pointing out a few of the apps which I use myself for text, code, […]

https://leonfurze.com/2024/10/02/which-tools-to-use-a-resource-list-of-genai-for-educators/


2024 (@2024@leonfurze.com)

The Near Future of Generative Artificial Intelligence in Education: September 2024 Update

In my book Practical AI Strategies, released in January 2024, I dedicated the final chapter to the near future of generative artificial intelligence. I spoke about the convergence of multimodal technologies such as image, audio, and video generation, as well as improvements in automated coding, which would make it easier for people with no technical skills to create simple applications. All of those January predictions have come true in the past six months, and so it's time for an update […]


staipa (@staipa@mastodon.uno)

This blog is created with Artificial Intelligence https://www.staipa.it/blog/questo-blog-e-creato-con-lintelligenza-artificiale/?feed_id=1666&_unique_id=66eaafe314fbc

No. Well, a little. This blog is partially written with Artificial Intelligence, have you noticed? Apart from the joke of a few days ago (short.staipa.it/4icd9), it's true that I use artificial intelligence on this blog.

First of all, I want to specify one ...

#ChatGPT #giornalismo #GostWriters #IA #IntelligenzaArtificiale


2024 (@2024@leonfurze.com)

Expertise Not Included: One of the Biggest Problems with AI in Education

The debate about the effectiveness of AI chatbots in education continues amidst rapid AI advancements. Despite the broad knowledge and potential of AI systems, learners need expertise to effectively utilize them. The challenge lies in knowing how to ask the right questions and identify inaccuracies. Customised, purposeful applications could provide a more beneficial alternative to generic AI chatbots.

https://leonfurze.com/2024/09/18/expertise-not-included/


openai-establishes-independent-safety-committee-without-altman-strengthening-transparency-and-safety-in-ai-development (@openai-establishes-independent-safety-committee-without-altman-strengthening-transparency-and-safety-in-ai-development@xenospectrum.com)

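OpenAI Establishes Independent Safety Committee Without Altman: Strengthening Transparency and Safety in AI Development

OpenAI has announced a groundbreaking initiative to strengthen transparency and safety in AI development. The company has established an independent safety and security committee, and AI […]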
