PruferJan (@PruferJan@mastodon.social)

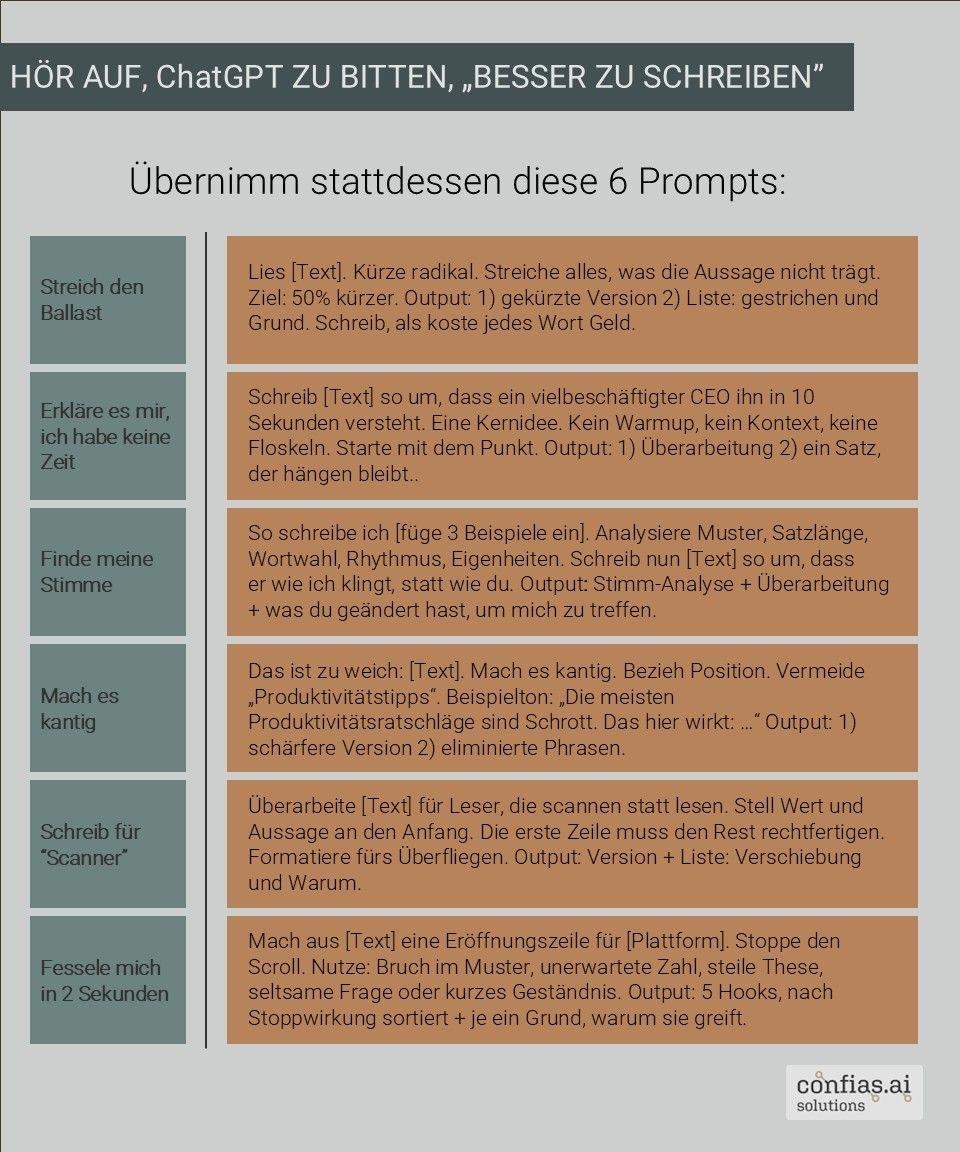

📝 Vague prompts = generic texts. What matters is the HOW, not the WHAT. 6 techniques for better AI texts

👉 My opinion: Iterative work beats perfect prompting technique. And: good prompts never replace your own thinking! 💡

(Credits for idea to Ruben Hassid, 05.01.2026, via LinkedIn, “Stop telling ChatGPT to ‘write better’”; social media editing: Confias AI Solutions)

azzatul (@azzatul@mastodon.social)

😘😘

.

.

.

#gadis #cantik #ai #chatgpt

TeddyTheBest (@TeddyTheBest@framapiaf.org)

Facing #OpenAI, Anthropic injects #health data into #Claude. A few days ago, OpenAI announced #ChatGPT Health, its #AI solution for surfacing personalized medical recommendations. (...)

https://www.lemondeinformatique.fr/actualites/lire-face-a-openai-anthropic-injecte-des-donnees-de-sante-dans-claude-99010.html

See also my previous toot about OpenAI at https://framapiaf.org/@TeddyTheBest/115880766087451249

2026 (@2026@imagazine.pl)

Clash of the AI giants: Gemini is taking users from ChatGPT. Google posts impressive growth

OpenAI's dominance of the generative AI market is starting to erode. The latest report from analytics firm Similarweb indicates that Google Gemini is steadily increasing its share of web traffic, becoming real competition for ChatGPT.

Although there is still a single leader (OpenAI), the gap between the two tech powerhouses is clearly narrowing.

According to data published in early January 2026, Google Gemini already accounts for 21.5 percent of traffic in the generative AI product segment. Over the same period, ChatGPT's market share fell to 64.5 percent. This means the two tools dominate the market, together accounting for about 86 percent of all traffic in this category.

The remaining 14 percent of the pie is split among smaller players such as DeepSeek, Grok (which belongs to the X platform), Perplexity, Claude, and Microsoft Copilot.

Google's effective strategy

The growing popularity of Google's tool is no accident but the result of a consistent strategy pursued throughout 2025. A year ago Gemini's share was just 5.7 percent; by December 2025 it had risen to 18.2 percent.
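The share figures quoted above can be checked with a few lines of arithmetic. The sketch below is only an illustration: the variable names are made up here, and the numbers are simply the ones quoted in this post (attributed to Similarweb), not independently verified data.

```python
# Traffic shares in percent, exactly as quoted in the post (assumed, not verified).
gemini_jan_2026 = 21.5
chatgpt_jan_2026 = 64.5
gemini_year_ago = 5.7
gemini_dec_2025 = 18.2

# Combined share of the two leaders and what is left for everyone else.
combined = gemini_jan_2026 + chatgpt_jan_2026
remainder = 100 - combined

# Gemini's growth over 2025, in percentage points and as a multiple.
growth_points = round(gemini_dec_2025 - gemini_year_ago, 1)
growth_factor = gemini_dec_2025 / gemini_year_ago

print(f"Gemini + ChatGPT: {combined} percent, others: {remainder} percent")
print(f"Gemini in 2025: +{growth_points} points, about {growth_factor:.1f}x")
```

The combined and remaining shares match the "about 86 percent" and "14 percent" stated in the post, and the 2025 figures imply that Gemini's share roughly tripled.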

The key moment turned out to be the Mountain View giant's decision to make advanced features, such as the experimental Gemini 2.5 Pro model, available to a broad audience free of charge. Aggressive pricing and rapid rollout of new features allowed Google to effectively "nibble away" at the leader.

A duopoly in the AI market

Similarweb's data clearly shows that the AI chatbot market is heading toward a duopoly. Although OpenAI still holds the first-mover advantage that allowed it to amass 700 million monthly active users, Google is catching up at express speed. Apple is also trying to make its presence felt in this sector, but for now it is the clash between the makers of ChatGPT and the owner of the most popular search engine that drives the whole sector.

Neither company intends to slow its pace of development. Google is integrating its AI with television services, turning Gemini into a home assistant, while OpenAI is betting on specialization, introducing ChatGPT Health, a dedicated solution that helps users analyze medical data. The competition for users' attention in 2026 therefore promises to be extremely interesting.

#chatboty #ChatGPT #GoogleGemini #news #OpenAI #rynekAI #Similarweb #statystykiAI #sztucznaInteligencja

ChatGPT Health with Apple Health integration: a new OpenAI feature

abucci (@abucci@buc.ci)

Regarding the ideological nature of what's at play, it's well worth looking more into ecological rationality and its neighbors. There is a pretty significant body of evidence at this point that in a wide variety of cases of interest, simple small data methods demonstrably outperform complex big data ones. Benchmarking is a tricky subject, and there are specific (and well-chosen, I'd say) benchmarks on which models like LLMs perform better than alternatives. Nevertheless, "less is more" phenomena are well-documented, and conversations about when to apply simple/small methods and when to use complex/large ones are conspicuously absent. Also absent are conversations about what Leonard Savage--the guy who arguably ushered in the rise of Bayesian inference, which makes up the guts of a lot of modern AI--referred to as "small" versus "large" worlds, and how absurd it is to apply statistical techniques to large worlds. I'd argue that the vast majority of horrors we hear LLMs implicated in involve large worlds in Savage's sense, including applications to government or judicial decisionmaking and "companion" bots. "Self-driving" cars that are not car-skinned trains are another (the word "self" in that name is a tell). This means in particular that applying LLMs to large world problems directly contradicts the mathematical foundations on which their efficacy is (supposedly) grounded.

Therefore, if we were having a technical conversation about large language models and their use, we'd be addressing these and related concerns. But I don't think that's what the conversation's been about, neither in the public sphere nor in the technical sphere.

All this goes beyond AI. Henry Brighton (I think?) coined the phrase "the bias bias" to refer to a tendency where, when applying a model to a problem, people respond to inadequate outcomes by adding complexity to the model. This goes for mathematical models as much as computational models. The rationale seems to be that the more "true to life" the model is, the more likely it is to succeed (whatever that may mean for them). People are often surprised to learn that this is not always the case: models can and sometimes do become less likely to succeed the more "true to life" they're made. The bias bias can lead to even worse outcomes in such cases, triggering the tendency again and resulting in a feedback loop. The end result can be enormously complex models and concomitant extreme surveillance to acquire data to feed the models. I look at FORPLAN or ChatGPT, and this is what I see.
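A toy sketch of the "less is more" effect described above, for readers who want something concrete. Everything in it is an assumption made for illustration: the linear signal, the hand-picked alternating noise values, and the two models (a least-squares line versus a polynomial that passes through every training point). It is not taken from the ecological rationality literature and is not evidence for the broader argument; it only shows that fitting the training data perfectly can make predictions worse.

```python
# Toy illustration only: the data, noise values, and both models are assumptions.

def fit_line(xs, ys):
    """Ordinary least-squares line; returns a predictor function."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return lambda x: intercept + slope * x


def fit_interpolant(xs, ys):
    """Lagrange polynomial through every training point (zero training error)."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            weight = 1.0
            for j, xj in enumerate(xs):
                if j != i:
                    weight *= (x - xj) / (xi - xj)
            total += yi * weight
        return total
    return predict


def mean_squared_error(predict, xs, ys):
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def true_signal(x):
    """The hypothetical noise-free relationship both models try to recover."""
    return 2.0 * x


# Eight noisy training points; the noise is hand-picked, not sampled.
train_x = [float(i) for i in range(8)]
noise = [0.3, -0.3, 0.3, -0.3, 0.3, -0.3, 0.3, -0.3]
train_y = [true_signal(x) + e for x, e in zip(train_x, noise)]

# Held-out points halfway between the training points, scored against the true signal.
test_x = [i + 0.5 for i in range(7)]
test_y = [true_signal(x) for x in test_x]

simple_model = fit_line(train_x, train_y)
complex_model = fit_interpolant(train_x, train_y)

simple_test_mse = mean_squared_error(simple_model, test_x, test_y)
complex_test_mse = mean_squared_error(complex_model, test_x, test_y)
complex_train_mse = mean_squared_error(complex_model, train_x, train_y)

print(f"simple model, held-out MSE:   {simple_test_mse:.4f}")
print(f"complex model, training MSE:  {complex_train_mse:.4f}")
print(f"complex model, held-out MSE:  {complex_test_mse:.4f}")
```

The more flexible model reproduces the training data exactly, noise included, and so does far worse on the held-out points than the two-parameter line. The noise pattern here was chosen to make the effect easy to see; with other data the gap can be smaller.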

#AI #GenAI #GenerativeAI #LLM #GPT #ChatGPT #LatentDiffusion #BigData #EcologicalRationality #LessIsMore #Bias #BiasBias

strangequark (@strangequark@dice.camp)

The #HerosJourney of #VibeCoding

#AI #LLM #ChatGPT #Claude #Programming #SoftwareEngineering

w (@w@peertube.gravitywell.xyz)

The UN Made AI-Generated Refugees

https://peertube.gravitywell.xyz/w/4ii53nSfAMnc3YBSVHv8Ju

w (@w@peertube.gravitywell.xyz)

Pro-AI Subreddit Bans 'Uptick' of Users Who Suffer from AI Delusions

https://peertube.gravitywell.xyz/w/mpivRJBJF2JKR7Ttjadhor

w (@w@peertube.gravitywell.xyz)

Teachers Are Not OK After ChatGPT

https://peertube.gravitywell.xyz/w/k3dbKBS6wfrZEMZqiCj57Y

w (@w@peertube.gravitywell.xyz)

Why Do Technocrats Want AI Consciousness?

https://peertube.gravitywell.xyz/w/4JVwxTmaUHHnJCTQmHG5Ht

abucci (@abucci@buc.ci)

I proposed two talks for that event. The one that was not accepted (excerpt below) still feels interesting to me and I might someday develop this more, although by now this argument is fairly well-trodden and possibly no longer timely or interesting to make. I obviously don't have the philosophical chops to make an argument at that level, but I'm fascinated by how this technology is so fervently pushed even though it fails on its own technical terms. You don't have to stare too long to recognize there is something non-technical driving this train. "The technologist with well-curated data points knocks chips of error off an AI model to reveal the perfect text generator latent within" is a pretty accurate description and is why I jokingly suggested someone should register the galate.ai domain the other day. If you're not familiar with the Pygmalion myth (in Ovid), check out the company Replika and then Pygmalion to see what I'm getting at. pygmal.io is also available!

Anyway:

ChatGPT and related applications are presented as inevitable and unquestionably good. However, Herbert Simon’s bounded rationality, especially in its more modern guise of ecological rationality, stresses the prevalence of “less is more” phenomena, while scholars like Arvind Narayanan (How to Recognize AI Snake Oil) speak directly to AI itself. Briefly, there are times when simpler models, trained on less data, constitute demonstrably better systems than complex models trained on large data sets. Narayanan, following Joseph Weizenbaum, argues that tasks involving human judgment have this quality. If creating useful tools for such tasks were truly the intended goal, one would reject complex models like GPT and their massive data sets, preferring simpler, less data intensive, and better-performing alternatives. In fact one would reject GPT on the same grounds that less well-trained versions of GPT are rejected in favor of more well-trained ones during the training of GPT itself.

How then do we explain the push to use GPT in producing art, making health care decisions, or advising the legal system, all areas requiring sensitive human judgment? One wonders whether models like GPT were never meant to be optimal in the technical sense after all, but rather in a metaphysical sense. In this view an optimized AI model is not a tool but a Platonic ideal that messy human data only approximates during optimization. As a sculptor with well-aimed chisel blows knocks chips off a marble block to reveal the statuesque human form hidden within, so the technologist with well-curated data points knocks chips of error off an AI model to reveal the perfect text generator latent within. Recent news reporting that OpenAI requires more text data than currently exists to perfect its GPT models adds additional weight to the claim that generative AI practitioners seek the ideal, not the real.

#AI #GenAI #GenerativeAI #GPT #ChatGPT #OpenAI #Galatea #Pygmalion

r (@r@fed.brid.gy)

'Shame Thrives in Seclusion:' How AI Porn Chatbots Isolate Us All

https://fed.brid.gy/r/https://www.404media.co/ai-porn-chatbots-erotic-chatgpt-noelle-perdue/
