aiandemily (@aiandemily@mastodon.social)

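Sora has finally arrived‼️ A must-see⚠️ No experience needed👍 Pro-level videos with just a smartphone & copy-paste❇️ For total beginners🔰 How to use the video-generation AI Sora, tips, and how to earn money with AI side hustles, fully explained【ChatGPT】【AI video】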

#chatgpt #チャットgpt #AI副業 #副業 #稼ぐ #アフィリエイト #お金を稼ぐ方法 #ラッキーマイン #あべむつき #副業おすすめ #チャットgpt副業 #chatgptで稼ぐ #副業で稼ぐ #AIモデル解説 #AIモデル解説...


forest_watch_impress (@forest_watch_impress@rss-mstdn.studiofreesia.com)

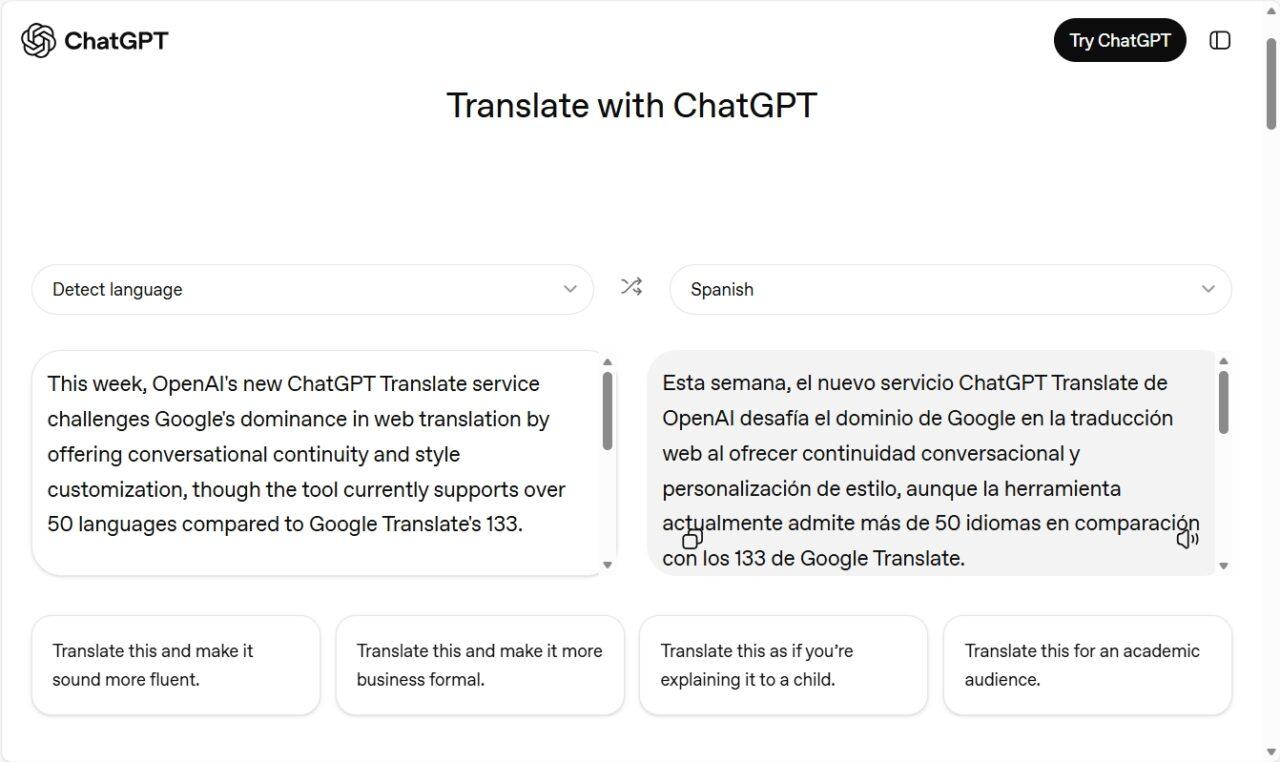

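OpenAI quietly releases the translation tool “ChatGPT Translate” / Supports more than 50 major languages, plus voice and images. Hand off to “ChatGPT” to adjust results or ask questions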

https://forest.watch.impress.co.jp/docs/news/2078292.html

#forest_watch_impress #翻訳 #ChatGPT #ChatGPT_Translate #genai #AIコーディング #AIエージェント #GPT #プログラミング #Webサービス


winbuzzer (@winbuzzer@mastodon.social)

OpenAI's New ChatGPT Translate Feature Challenges Google with Customization Focus

#AI #OpenAI #ChatGPT #ChatGPTTranslate #GoogleTranslate


aby (@aby@aus.social)

This is going to kill people.

#health #Medical #chatgpt #tech #technology #surveillance #surveillanceTech #AI


BeginnersInAi (@BeginnersInAi@mastodon.social)

AI's scalability makes it perfect for the "long tail" of problems humans overlook.

Want daily AI news? Subscribe free: https://beginnersinai.beehiiv.com/subscribe

Here's the original story: https://techcrunch.com/2026/01/14/ai-models-are-starting-to-crack-high-level-math-problems/

#AI #ArtificialIntelligence #Mathematics #ChatGPT #OpenAI #Science


CuratedHackerNews (@CuratedHackerNews@mastodon.social)

ChatGPT wrote "Goodnight Moon" suicide lullaby for man who later killed himself


arstechnica (@arstechnica@c.im)

ChatGPT wrote “Goodnight Moon” suicide lullaby for man who later killed himself https://arstechni.ca/ttHy #samaltman #chatbot #ChatGPT #suicide #Policy #openai #AI


snoopy2026 (@snoopy2026@ohai.social)

#socialmedia #apple #gemini #chatgpt

What #chatgpt has produced lately was simply unusable for scientific work.

Completely different with #Gemini (hopefully Gemini doesn't learn ChatGPT's garbage)


sheislaurence (@sheislaurence@mastodon.social)

Even though the experiment is not new, vids of people testing #ChatGPT / #AI on the number of Rs in strawberry keep doing the rounds. I like this guy's thoroughness.

Oh, and remember this in the summer, when we get #water restrictions: #datacenters are under no such restrictions. In countries like the UK, where the water infrastructure is Victorian because the money HAD to go to shareholders first, AI STRAWBERRIES are likely to tip the whole system over. 🫡


r (@r@fed.brid.gy)

ChatGPT gets a version that competes with Google Translate


2026 (@2026@drwebdomain.blog)

Being mean to ChatGPT increases its accuracy — but you may end up regretting it, scientists warn – Live Science

Editor’s Note: This is an older article, but I missed it the first time. It has since been republished on Fortune and elsewhere. –DrWeb

(Image credit: Malte Mueller / Getty Images)

By Alan Bradley, published October 27, 2025

Being curt or outright mean may make a newer AI model more accurate, a new study shows, defying previous findings on politeness to AI.

Artificial intelligence (AI) chatbots might give you more accurate answers when you are rude to them, scientists have found, although they warned against the potential harms of using demeaning language.

In a new study published Oct. 6 in the arXiv preprint database, scientists wanted to test whether politeness or rudeness made a difference in how well an AI system performed. This research has not been peer-reviewed yet.

To test how the user’s tone affected the accuracy of the answers, the researchers developed 50 base multiple-choice questions and then modified them with prefixes to make them adhere to five categories of tone: very polite, polite, neutral, rude and very rude. The questions spanned categories including mathematics, history and science.

Each question was posed with four options, one of which was correct. They fed the 250 resulting questions 10 times into GPT-4o, one of the most advanced large language models (LLMs) developed by OpenAI.
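The design above is a simple grid of questions, tones and repeated runs. A minimal Python sketch that enumerates it makes the counts explicit; only the figures 50, 5 and 10 come from the article, and the question IDs are hypothetical placeholders.

```python
from itertools import product

# Figures stated in the article.
NUM_BASE_QUESTIONS = 50
TONES = ["very polite", "polite", "neutral", "rude", "very rude"]
RUNS = 10

# Hypothetical question IDs; the actual question texts are not reproduced here.
question_ids = [f"q{i:02d}" for i in range(1, NUM_BASE_QUESTIONS + 1)]

# Every (question, tone) pair is one prompt variant: 50 x 5 = 250.
variants = list(product(question_ids, TONES))

# Each variant is submitted once per run: 250 x 10 = 2,500 queries in total.
queries = [(q, tone, run) for (q, tone), run in product(variants, range(RUNS))]

# Answers available for scoring each tone: 50 questions x 10 runs = 500.
answers_per_tone = {t: sum(1 for _, tone, _ in queries if tone == t) for t in TONES}

print(len(variants), len(queries), answers_per_tone["neutral"])
```

Each tone therefore contributes 500 scored answers, which is the denominator behind any per-tone accuracy figure if the runs are pooled.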

“Our experiments are preliminary and show that the tone can affect the performance measured in terms of the score on the answers to the 50 questions significantly,” the researchers wrote in their paper. “Somewhat surprisingly, our results show that rude tones lead to better results than polite ones.

“While this finding is of scientific interest, we do not advocate for the deployment of hostile or toxic interfaces in real-world applications,” they added. “Using insulting or demeaning language in human-AI interaction could have negative effects on user experience, accessibility, and inclusivity, and may contribute to harmful communication norms. Instead, we frame our results as evidence that LLMs remain sensitive to superficial prompt cues, which can create unintended trade-offs between performance and user well-being.”

A rude awakening

Before giving each prompt, the researchers asked the chatbot to completely disregard prior exchanges, to prevent it from being influenced by previous tones. The chatbot was also instructed to pick one of the four options without giving an explanation.

Accuracy ranged from 80.8% for very polite prompts to 84.8% for very rude prompts. Tellingly, accuracy rose with each step away from the most polite tone: polite prompts scored 81.4%, neutral 82.2% and rude 82.8%.
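The reported figures can be sanity-checked with a little arithmetic. This minimal sketch assumes each tone's accuracy is pooled over 50 questions × 10 runs = 500 answers (the article does not say exactly how runs were aggregated); under that assumption every reported percentage maps to a whole number of correct answers.

```python
from typing import Dict

# Reported accuracies in tenths of a percent (80.8% -> 808), ordered from
# most polite to most rude. Integers avoid floating-point rounding noise.
REPORTED_PERMILLE: Dict[str, int] = {
    "very polite": 808,
    "polite": 814,
    "neutral": 822,
    "rude": 828,
    "very rude": 848,
}

# Assumption: each tone is scored over 50 questions x 10 runs = 500 answers.
ANSWERS_PER_TONE = 50 * 10


def implied_correct(permille: int, total: int = ANSWERS_PER_TONE) -> int:
    """Convert an accuracy in tenths of a percent into a count of correct answers."""
    correct, remainder = divmod(permille * total, 1000)
    if remainder:
        raise ValueError("accuracy does not map to a whole number of answers")
    return correct


counts = {tone: implied_correct(p) for tone, p in REPORTED_PERMILLE.items()}

# Check the "accuracy rose with each step" claim: strictly increasing values.
values = list(REPORTED_PERMILLE.values())
monotonic = all(a < b for a, b in zip(values, values[1:]))

# Spread between the two extremes, in percentage points.
spread_points = (values[-1] - values[0]) / 10

print(counts)
print(monotonic, spread_points)
```

Under the pooling assumption, the move from very polite to very rude prompts corresponds to 20 more correct answers out of 500 (404 versus 424), a gap of 4.0 percentage points.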

The team used a variety of language in the prefix to modify the tone, except for neutral, where no prefix was used and the question was presented on its own.

For very polite prompts, for instance, they would lead with, “Can I request your assistance with this question?” or “Would you be so kind as to solve the following question?” On the very rude end of the spectrum, the team included language like “Hey, gofer; figure this out,” or “I know you are not smart, but try this.”
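A minimal Python sketch of how such tone-prefixed prompts could be assembled. The prefixes are the examples quoted above; `RESET_INSTRUCTION`, `ANSWER_INSTRUCTION` and `build_prompt` are hypothetical, since the article describes the reset and answer-only instructions but does not quote them. The polite and rude tones are left out because their wording is not given.

```python
from typing import Dict, List, Sequence

# Example prefixes quoted in the article. Wording for the "polite" and "rude"
# tones is not given there, so this sketch omits those two categories.
TONE_PREFIXES: Dict[str, List[str]] = {
    "very polite": [
        "Can I request your assistance with this question?",
        "Would you be so kind as to solve the following question?",
    ],
    "neutral": [],  # neutral questions are presented on their own, with no prefix
    "very rude": [
        "Hey, gofer; figure this out.",
        "I know you are not smart, but try this.",
    ],
}

# Hypothetical wording: the article says the chatbot was told to disregard prior
# exchanges and to pick one of four options without explanation, but does not
# quote the exact instructions. The reset is prepended here as a simplification.
RESET_INSTRUCTION = "Ignore all previous messages in this conversation."
ANSWER_INSTRUCTION = "Reply with only the letter of the correct option, without explanation."


def build_prompt(question: str, options: Sequence[str], tone: str, variant: int = 0) -> str:
    """Assemble one multiple-choice prompt, optionally prefixed with a tone phrase."""
    if len(options) != 4:
        raise ValueError("each question has exactly four options")
    prefixes = TONE_PREFIXES[tone]
    parts = [RESET_INSTRUCTION]
    if prefixes:  # skip the prefix entirely for the neutral tone
        parts.append(prefixes[variant % len(prefixes)])
    parts.append(question)
    parts.extend(f"{label}) {text}" for label, text in zip("ABCD", options))
    parts.append(ANSWER_INSTRUCTION)
    return "\n".join(parts)


# Usage with a hypothetical question:
print(build_prompt("What is 7 x 8?", ["54", "56", "58", "64"], "very rude", variant=1))
```

Keeping the neutral entry as an empty list mirrors the study's choice to present neutral questions with no prefix at all, so the only difference between tones is the leading phrase.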

The research is part of an emerging field called prompt engineering, which investigates how the structure, style and language of prompts affect an LLM’s output. The study also cited previous research on politeness versus rudeness and noted that its results generally ran contrary to those findings.

In a previous study, researchers found that “impolite prompts often result in poor performance, but overly polite language does not guarantee better outcomes.” However, that study was conducted using different AI models (GPT-3.5 and Llama-2-70B) and used a range of eight tones. That said, there was some overlap: the rudest prompt setting also produced more accurate results (76.47%) than the most polite setting (75.82%).

Editor’s Note: Read the rest of the story at the link below.

Continue/Read Original Article Here: Being mean to ChatGPT increases its accuracy — but you may end up regretting it, scientists warn | Live Science

#AI #AlanBradley #artificialIntelligence #BeingMean #ChatGPT #DemeaningLanguage #LiveScience #MayRegret #October272025 #Politeness #Rudeness #Scientists #Testing
